The $2M Lesson From Vercel: How One AI Chrome Extension Became a Supply Chain Breach

On April 19, 2026, Vercel — the cloud platform that hosts millions of web applications, including a substantial share of the modern internet — disclosed a security incident. The entry point wasn’t a zero-day. It wasn’t a phishing email. It wasn’t a misconfigured firewall.

It was an AI Chrome extension one employee had installed.

Within days, an internal Vercel database was listed for sale on BreachForums for $2 million. The kill chain was almost embarrassingly simple, and it’s the exact same chain sitting inside every SMB right now where employees use AI tools.

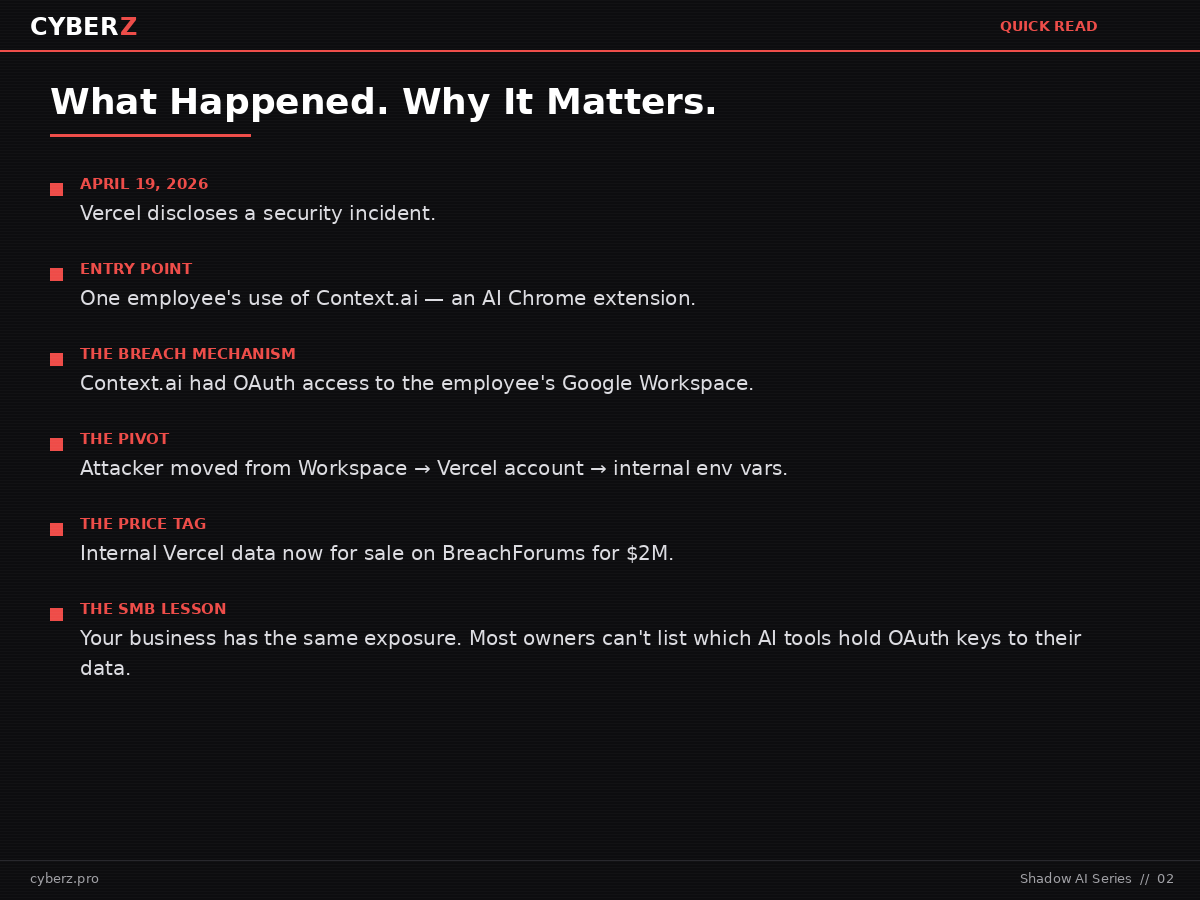

Quick Read — What Happened and Why It Matters

- On April 19, 2026, Vercel disclosed a security incident that began with a third-party AI tool installed by a single employee.

- The AI tool — Context.ai — had been granted OAuth access to the employee’s Google Workspace account. When Context.ai was compromised, that access became the attacker’s front door.

- From there, the attacker pivoted into Vercel’s environment and exfiltrated internal data. An internal Vercel database was listed for sale on BreachForums for $2 million.

- Vercel is a sophisticated tech company. The thing that failed wasn’t their infrastructure. It was a single OAuth grant to an AI tool no one had inventoried.

- If you run an SMB and your employees use AI tools, you have the same exposure right now. Most owners cannot list which AI tools have access to their data — and that is the entire problem.

What Actually Happened at Vercel

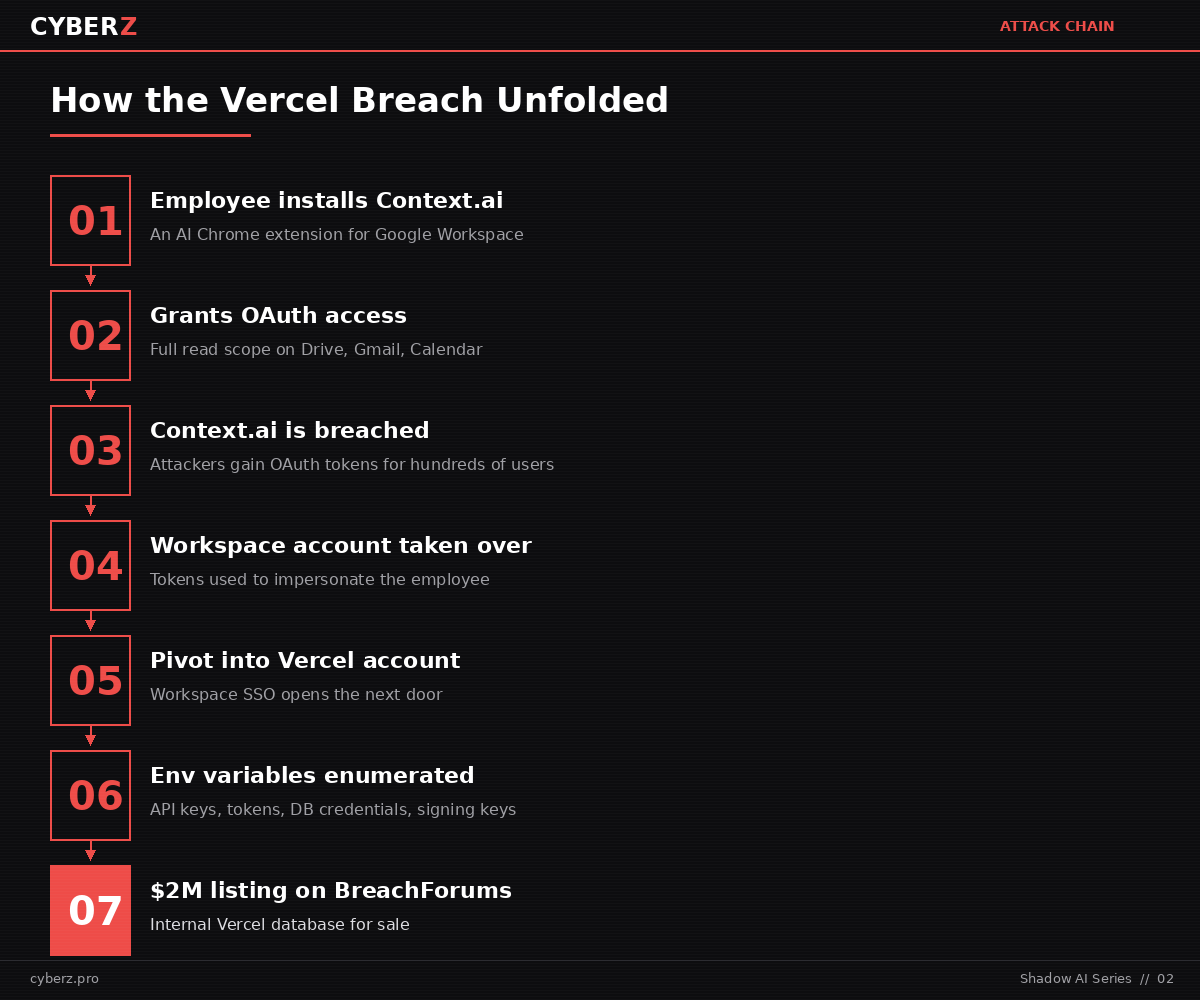

Vercel published a security bulletin disclosing unauthorized access to internal systems. The sequence, in plain language:

- A Vercel employee was using Context.ai, a third-party AI tool. To make it work, they logged in with their Google Workspace account and granted it OAuth access — meaning the tool could read data on their behalf.

- Context.ai got breached. Attackers gained access to the OAuth tokens that Context.ai held for its users.

- The attacker used those tokens to take over the Vercel employee’s individual Google Workspace account.

- From the compromised Workspace account, the attacker pivoted into the employee’s Vercel account.

- From the Vercel account, they moved into Vercel’s environment and enumerated and decrypted non-sensitive environment variables — the kind of values that include API keys, tokens, database credentials, and signing keys when they’re not specifically marked as sensitive.

- Stolen Vercel data was advertised on BreachForums, a known marketplace for stolen credentials, for $2 million.

Vercel’s own bulletin describes the attacker as “highly sophisticated based on their operational velocity and in-depth understanding of Vercel’s product API surface.” That phrasing matters. The entry was banal — an OAuth grant any employee can create in two clicks — but what came after was the work of someone who knew exactly what they were doing once they were inside.

Vercel has confirmed that no npm packages they publish were tampered with, and the wider JavaScript supply chain remains intact. That’s the good news. The bad news is the affected scope of Context.ai’s OAuth compromise is, in Vercel’s words, “potentially affecting its hundreds of users across many organizations.” Vercel was one. There are others.

Why This Isn’t a “Big Tech Problem”

The natural reaction is: Vercel is huge, we’re not, this doesn’t apply to us. The opposite is true.

Vercel runs a real security program. They publish security bulletins, they engage Mandiant, they coordinate with GitHub, Microsoft, npm, and Socket. They have the staff and the budget and the institutional knowledge that almost no SMB has.

And it still happened — because the thing that failed wasn’t a Vercel system. It was an OAuth grant from one employee to one AI tool that Vercel almost certainly didn’t have on any official inventory. That’s the part that translates directly to a 30-person accounting firm, a 50-person law office, a 20-person healthcare practice, or a CMMC-tracked defense subcontractor.

If Vercel’s controls didn’t catch this, the dental practice with eighteen employees and no IT staff has zero chance of catching it.

According to Reco AI’s 2025 breach review, shadow AI incidents add an average of $670,000 to the cost of a breach compared to incidents that don’t involve unauthorized AI tools. That’s the cost of the AI tool no one approved being part of the kill chain.

The Shadow AI Pattern You’re Already Living With

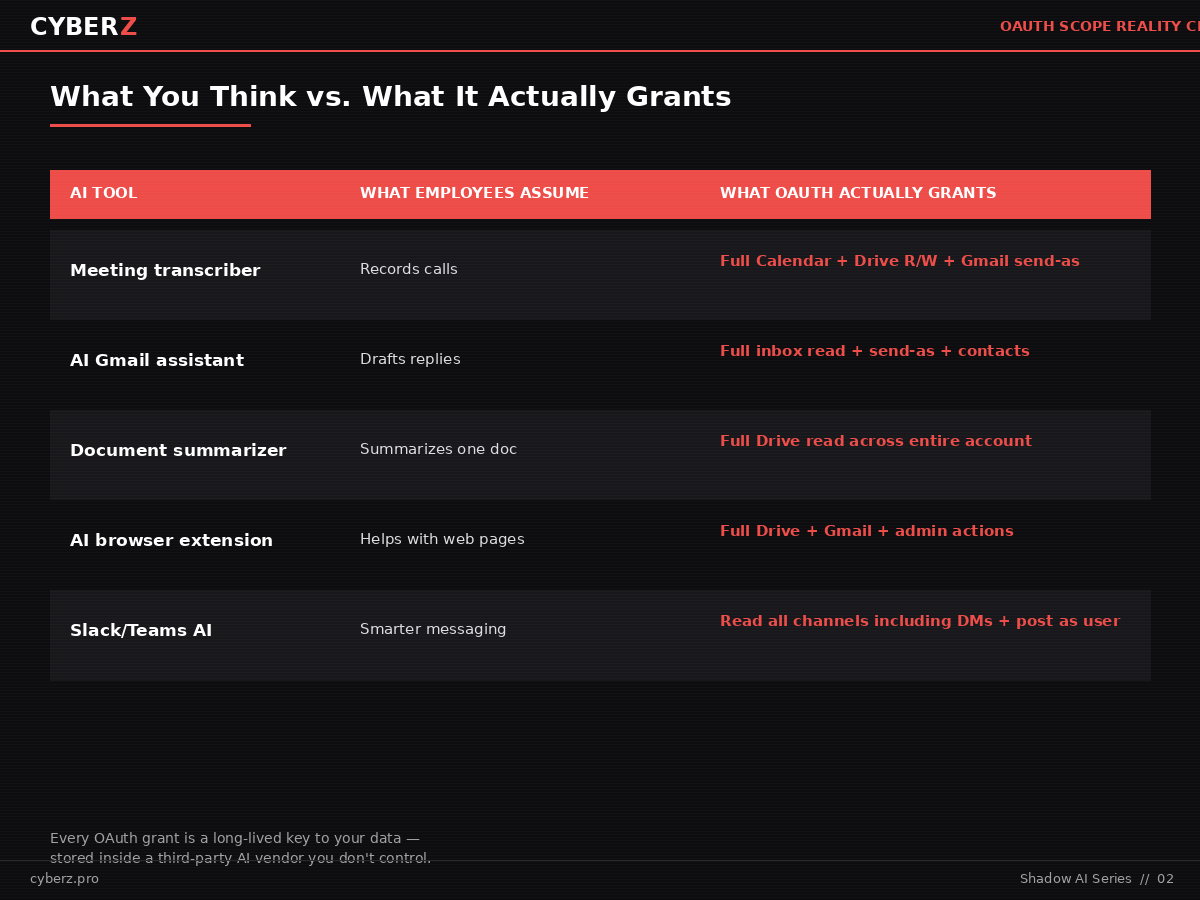

Walk through what your employees are doing right now, today, without telling you:

- Installing AI Chrome extensions that summarize web pages, transcribe meetings, write email drafts, or “make Gmail smarter.”

- Connecting AI tools to Google Workspace or Microsoft 365 with one click — and granting whatever OAuth scope the tool requests.

- Using AI research assistants that need access to their Drive to “remember context.”

- Trying out the latest AI-for-X tool because they saw it on LinkedIn and the free tier looked useful.

Each of these is an OAuth grant. Each OAuth grant is a long-lived key to your data that lives inside a third party’s infrastructure, far outside any control you have.

The economics of this for an attacker are devastatingly good. They don’t need to breach you. They need to breach one of the AI vendors your employees trust. From there, every customer of that vendor becomes a potential target with the OAuth scope already in place. That is what happened to Vercel — and Vercel was, by their own assessment, one of “hundreds” of organizations potentially affected by the Context.ai compromise.

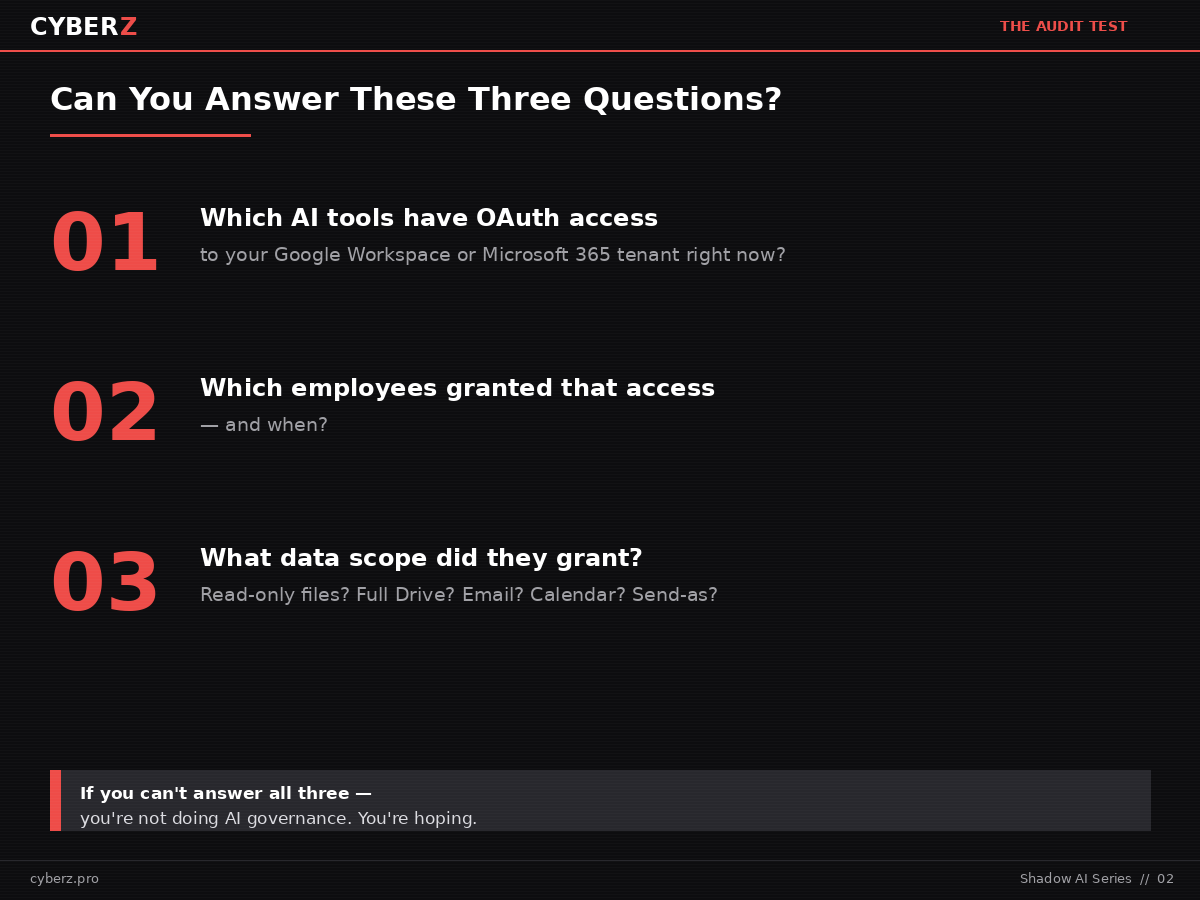

The Three Questions Every SMB Owner Should Be Able to Answer This Week

Stop reading for a second. Try to answer these out loud:

- Which AI tools have OAuth access to your Google Workspace or Microsoft 365 tenant right now?

- Which employees granted that access — and when?

- What data scope did they grant? Read-only on individual files? Full Drive? Email? Calendar? Send-as?

Most SMB owners cannot answer any of the three. Some can’t even answer the first one. That gap — between “we use AI responsibly” and “we have no inventory of AI tools with keys to our data” — is the entire shadow AI problem in one paragraph.

The good news is that closing the gap is mostly free. It’s an audit, not a product purchase. The bad news is that almost no one runs it until they have to.

What To Do This Week

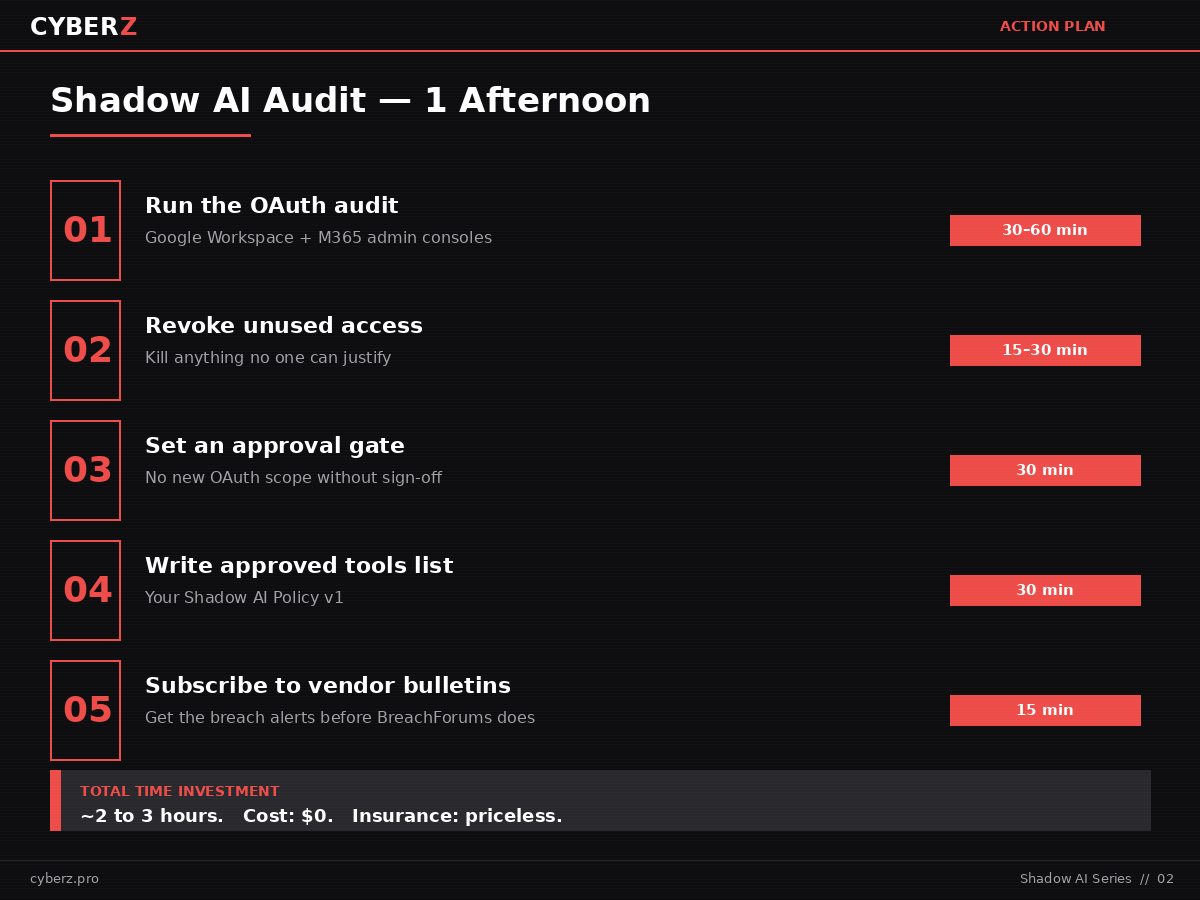

You can run a basic shadow AI audit in an afternoon. Here is the order:

Step 1 — Run the OAuth audit (30–60 minutes).

In Google Workspace: Admin Console → Security → Access and data control → API controls → App access control. Look at “Configured apps” and “Connected apps.” Note every third-party app and the scopes it has been granted.

In Microsoft 365: Entra Admin Center → Enterprise applications → All applications. Filter for third-party apps with delegated or application permissions. Note every AI tool and the permissions it has.

You’re looking for any AI-related tool — anything with “AI,” “assistant,” “GPT,” “Claude,” “Copilot” (third-party, not Microsoft’s), “summarizer,” “transcribe,” or “research” in its name. There will be more than you expect.

Step 2 — Revoke unused AI app access (15–30 minutes).

For every app on the list, ask one question: Can any employee tell me the business reason this tool needs access? If no one can name it, kill the access. Force a re-request if the tool is genuinely needed.

Step 3 — Set an approval gate (30 minutes).

Going forward, no AI tool gets OAuth scope above “read individual file” without owner or IT sign-off. Write this down. Email it to your team. Pin it in Slack. The point isn’t bureaucracy — it’s that someone outside the requesting employee has to look at the scope being granted before it happens.

Step 4 — Write your approved AI tools list (30 minutes).

A one-page document is better than nothing. List every AI tool your business approves for work use, and the scope each one is allowed to request. This becomes your shadow AI policy v1. You can formalize it later.

Step 5 — Subscribe to vendor security bulletins (15 minutes).

For every AI tool with OAuth scope into your tenant, find and subscribe to its security bulletin or status page. Most have them. Almost nobody reads them — until a Vercel-style incident hits and you find out you were on the list a week late.

The TAISE Angle

The Trustworthy AI Security Engineering framework — developed by the Cloud Security Alliance and Northeastern University, and recognized with a 2026 SC Award — opens with two modules that map directly to this incident.

Module 1 is AI inventory: knowing every AI tool, agent, model, and integration in your environment. The Vercel breach started precisely because Context.ai wasn’t on any inventory the company maintained.

Module 2 is shadow AI discovery: the systematic, ongoing process of finding the AI tools employees adopt on their own — before they become part of an attack chain.

If you’ve read Part 1 of this series, this incident is the worked example of why that framework matters. TAISE isn’t academic. It’s a checklist for not being Vercel’s next case study.

The Bottom Line

Shadow AI is not a future problem. It is already inside your business, in the form of OAuth grants your employees made to AI tools you have not inventoried. Vercel found out the hard way, with a $2 million asking price on a stolen database and a four-paragraph security bulletin to write.

The fix is mostly free. It’s an OAuth audit, an approval gate, and a one-page policy you can write before lunch. The Vercel incident is the easiest case study you’ll ever have for getting your leadership team to take this seriously.

The companies that don’t run the audit aren’t going to be safer. They’re just going to find out about their shadow AI problem the same way Vercel did.

Sources

- Vercel, “Vercel April 2026 security incident” official security bulletin (April 24, 2026 update)

- Ox Security, “Vercel Breached via Context AI Supply Chain Attack” (April 2026)

- Reco AI, “AI & Cloud Security Breaches: 2025 Year in Review” (March 2026)

- Cloud Security Alliance & Northeastern University, “TAISE Framework” (2026)

- Infosecurity Magazine, “Researchers Uncover 10 In-the-Wild Indirect Prompt Injection Attacks” (April 2026)

Zvi Melkman is an AI & cybersecurity consultant for SMBs and the author of the CyberZ series. He helps healthcare, legal, accounting, and defense-sector firms adopt AI without creating compliance nightmares.

Don't know which AI tools have OAuth keys to your data?

The Shadow AI Risk Assessment Kit walks you through the exact OAuth audit described above — discovery templates, risk scoring, revoke-or-keep decision matrix, and a prioritized action plan you can run in an afternoon.

Get the Kit →