What AI Is Actually Doing Inside Your Business — And Why It's a Security Problem

Your employees are using AI right now. Not the AI tools you approved — the ones they found on their own.

An IBM study found that 80% of American office workers use AI in their roles, but only 22% rely exclusively on employer-provided tools. The rest? They’re using personal ChatGPT accounts, free Copilot tiers, and whatever browser extension promised to “summarize this email in one click.”

This isn’t a technology problem. It’s a visibility problem. And for a small business in healthcare, legal, or accounting — where client data is regulated — it’s a compliance problem you might not know exists until an auditor or a breach tells you.

The Shadow AI Problem Is Bigger Than You Think

“Shadow AI” is the term for AI tools employees use without IT’s knowledge or approval. Think of it as shadow IT’s more dangerous sibling: unlike unauthorized Dropbox accounts, AI tools don’t just store your data. They process it and, depending on the tool and plan, may retain it or use it to train future models.

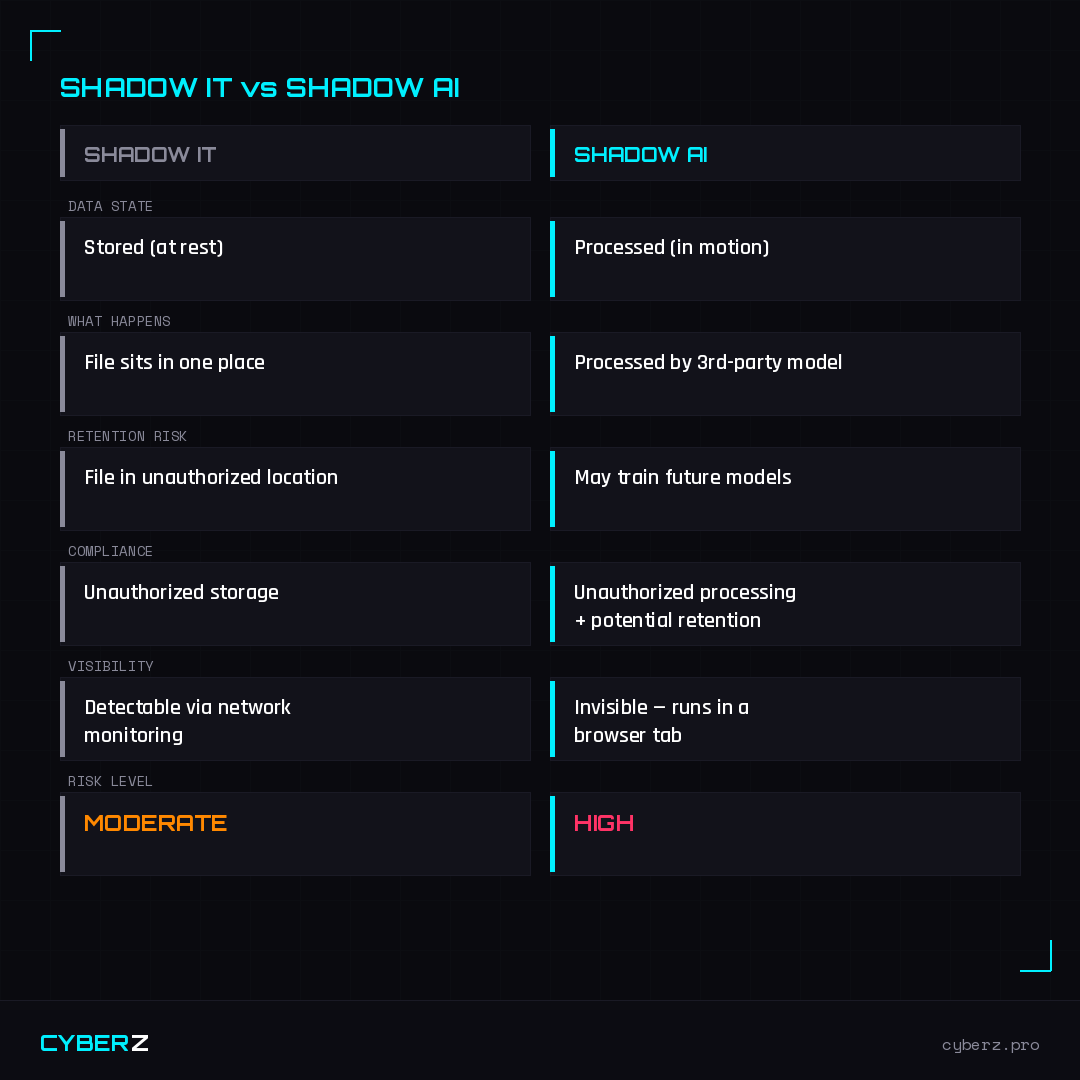

Here’s what makes shadow AI fundamentally different from the shadow IT problems of the last decade:

When an employee uploads a contract to an unauthorized cloud folder, the document sits in one place. When they paste that same contract into ChatGPT asking “summarize the key obligations,” that text has been processed by a third-party model with its own data handling policies. Shadow IT stores your data in the wrong place. Shadow AI processes your data through a system you don’t control — and depending on the tool and plan, that data could be used to train future models.

The compliance trigger is different too. Shadow IT creates an unauthorized storage problem. Shadow AI creates unauthorized processing and potential retention, and it’s nearly invisible: it runs in a browser tab over ordinary encrypted web traffic, with nothing installed and nothing that stands out unless someone is actively looking for it.

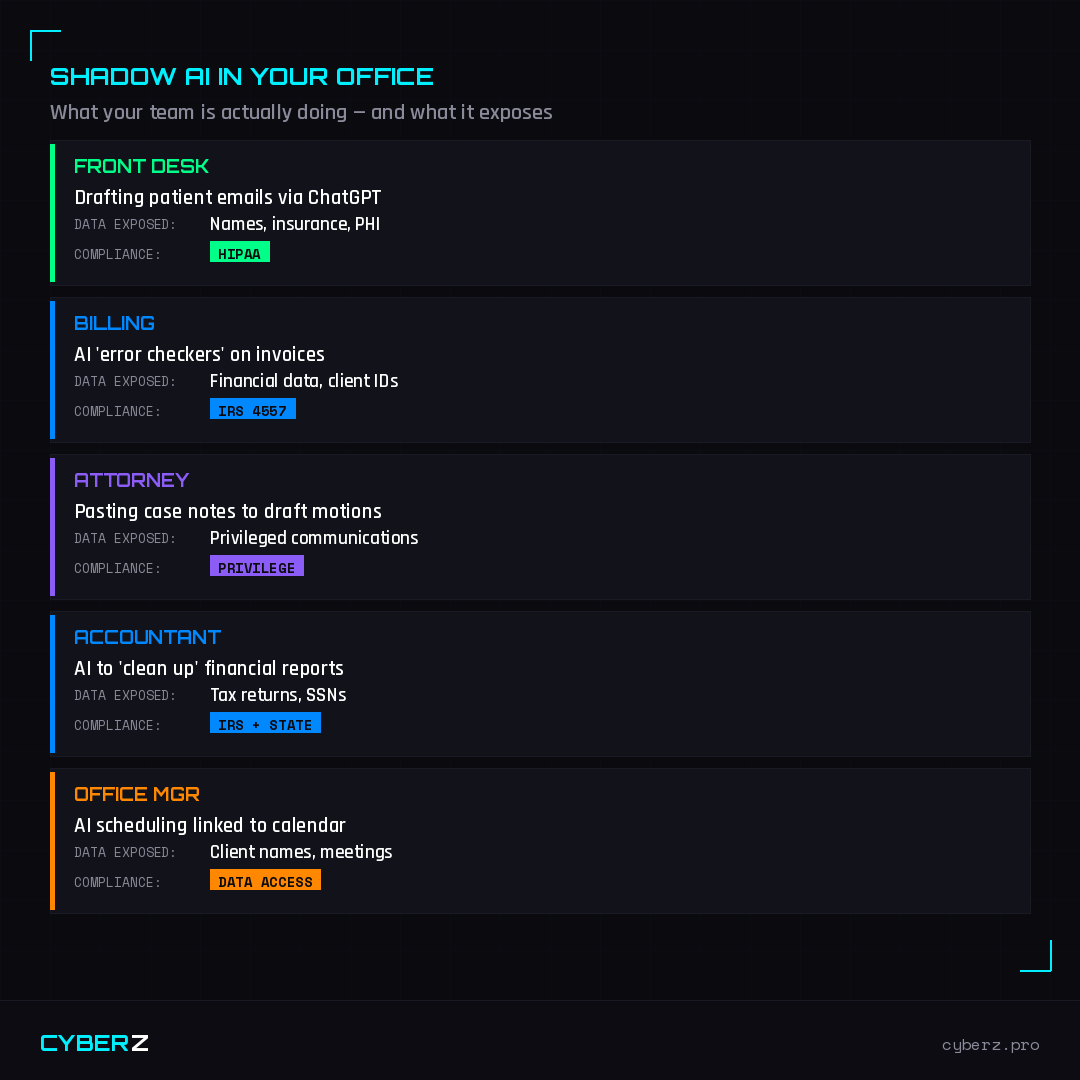

What Your Team Is Actually Doing With AI

Let’s be specific. Here’s what shadow AI looks like inside a typical 30-person firm:

None of these people are doing anything malicious. They’re trying to work faster. But every one of these actions creates a data exposure event that could trigger a regulatory violation.

Why Small Businesses Are More Exposed

Enterprise companies have dedicated security teams, AI governance committees, and the budget to deploy approved AI tools with proper data controls. A 30-person medical practice has none of that. As a result, small businesses face higher per-employee risk from shadow AI, and it comes down to three factors.

No AI policy exists. Without a written acceptable use policy, employees have no guardrails, and the business has little evidence of reasonable safeguards to point to if something goes wrong.

Free tools dominate. Free-tier AI tools almost universally have weaker data protections. Many explicitly state in their terms of service that user inputs may be used for model training.

Nobody’s watching. In a 20-person firm, there’s often no IT function at all — and the “tech person” is whoever is youngest in the office.

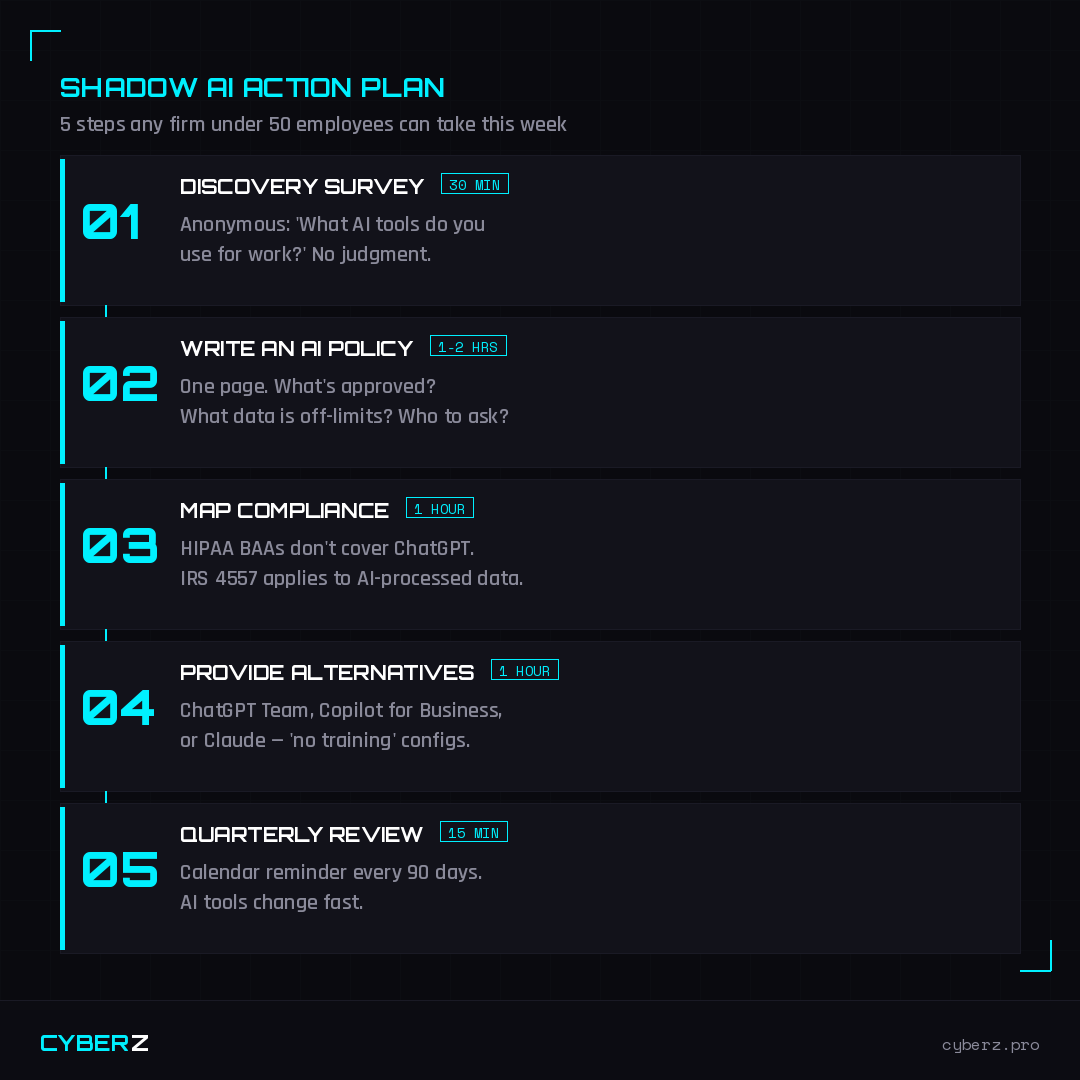

What To Do About It This Week

You don’t need a six-figure security budget. Here’s a realistic 5-step action plan for a firm under 50 employees:

Step 1 — Discovery survey (30 min to create). Send an anonymous survey: “What AI tools do you use for work?” No judgment — the goal is visibility, not punishment.

Step 2 — Write an AI acceptable use policy (1–2 hours). One page. Three questions: What tools are approved? What data is off-limits? Who do you ask when you’re unsure?

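Step 3 — Map your compliance obligations (1 hour). A personal ChatGPT account is not covered by a HIPAA business associate agreement (BAA), so patient data should not go into it. Bar ethics rules on confidentiality still apply when case data is processed by AI. The safeguards in IRS Pub 4557 still apply to taxpayer information processed by AI.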

Step 4 — Provide approved alternatives (1 hour setup). Give your team tools with proper data controls — ChatGPT Team, Copilot for Business, or Claude for Work all offer “no training on your data” configurations.

Step 5 — Set a quarterly review cadence (15 min). Calendar reminder every 90 days. AI tools change fast — your policy needs to keep up.
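
For firms that want to track this in something more structured than a document, Steps 1, 2, and 5 can be sketched in a few lines of Python. This is a minimal illustration, not a finished tool: the class and function names, the tool names, the contact, and the dates are all hypothetical, and it assumes survey answers arrive as one list of tool names per respondent.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AIPolicy:
    """Hypothetical one-page policy: approved tools, off-limits data, who to ask."""
    approved_tools: set[str]     # lowercase tool names
    off_limits_data: set[str]    # lowercase data categories
    contact: str
    last_reviewed: date

    def check(self, tool: str, data_type: str) -> str:
        # Step 2: answer the policy's three questions for one request.
        if tool.lower() not in self.approved_tools:
            return f"not approved; ask {self.contact}"
        if data_type.lower() in self.off_limits_data:
            return f"approved tool, but {data_type} is off-limits"
        return "allowed"

    def next_review(self, cadence_days: int = 90) -> date:
        # Step 5: the next review falls 90 days after the last one.
        return self.last_reviewed + timedelta(days=cadence_days)


def tally_survey(responses: list[list[str]]) -> Counter:
    # Step 1: count how many respondents named each tool (case-insensitive).
    counts = Counter()
    for tools in responses:
        # A set ensures each respondent counts once per tool.
        counts.update({t.strip().lower() for t in tools})
    return counts


def unapproved(counts: Counter, policy: AIPolicy) -> list[str]:
    # Tools people report using that the policy does not cover.
    return sorted(t for t in counts if t not in policy.approved_tools)


# Illustrative data only; none of this comes from a real survey.
policy = AIPolicy(
    approved_tools={"chatgpt team"},
    off_limits_data={"client records"},
    contact="the office manager",
    last_reviewed=date(2026, 1, 5),
)
counts = tally_survey([
    ["ChatGPT (personal)", "Grammarly"],
    ["chatgpt (personal)"],
    ["ChatGPT Team"],
])

print(unapproved(counts, policy))
print(policy.check("ChatGPT Team", "client records"))
print(policy.next_review())
```

The list returned by `unapproved` is the gap between what the survey found and what the policy covers, which is a reasonable agenda for each quarterly review.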

The Bottom Line

AI isn’t the enemy. Invisible AI is. Your team is already using these tools — the question is whether you know which ones, what data they’re feeding into them, and whether any of it triggers a compliance obligation.

The businesses that will avoid the coming wave of AI-related compliance actions aren’t the ones that banned AI. They’re the ones that got visibility into what was already happening and put practical guardrails in place before a regulator or a breach forced them to.

This is Part 1 of an 8-part series on AI security for small businesses. Next week: ChatGPT, Copilot, AI Agents — What Your Team Is Already Using.

Sources

- IBM / Censuswide, “Rising AI Adoption Creating Shadow Risks” (February 2026)

- SQ Magazine, “Shadow AI Usage Statistics 2026” (April 2026)

- Second Talent, “Top 50 Shadow AI Statistics 2026” (March 2026)

- BlackFog, “The Rise of Shadow AI” (February 2026)

- JumpCloud, “11 Stats About Shadow AI in 2026” (February 2026)

Zvi Melkman is an AI & cybersecurity consultant for SMBs and the author of the CyberZ series. He helps healthcare, legal, and accounting firms adopt AI without creating compliance nightmares.

Not sure what AI tools your team is using?

The Shadow AI Risk Assessment Kit walks you through a full discovery process — survey templates, risk scoring, and a prioritized action plan.
